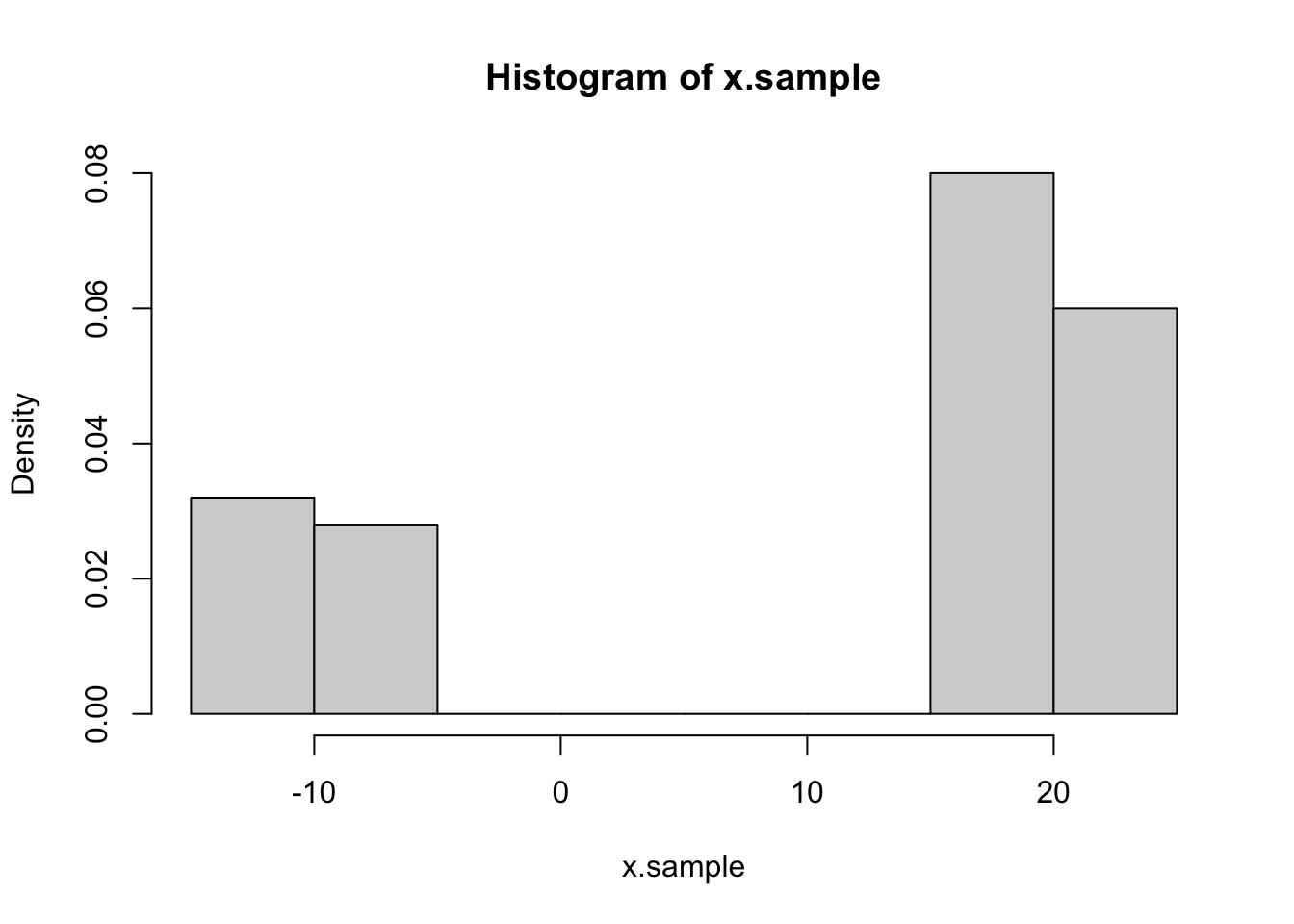

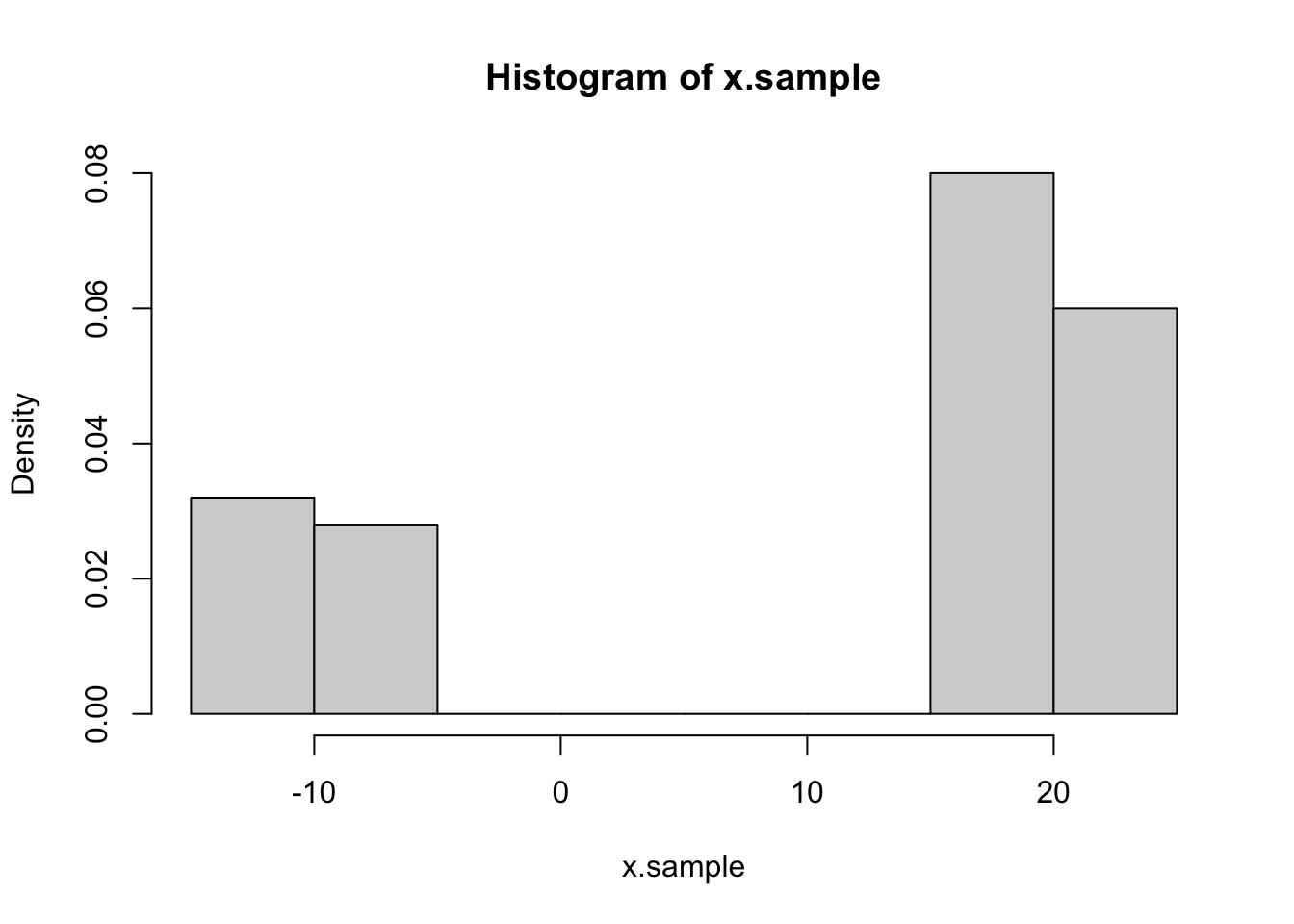

head(x.sample)[1] -8.560997 -8.955152 20.313554 21.465090 19.110296 19.483237hist(x.sample, freq = F, breaks = 10)

EM algorithm is an iterative method to estimate parameter.

Assume you have both unknown parameter and variable to estimate:

Keep iterate between E and M step then reach to the maximum.

Assume you have a random sample from the follow distribution. Try to estimate: \(p, \mu_1, \mu_2, \sigma_1^2, \sigma_2^2\) \[X \sim p N(\mu_1, \sigma_1^2) + (1-p) N(\mu_2, \sigma_2^2)\]

head(x.sample)[1] -8.560997 -8.955152 20.313554 21.465090 19.110296 19.483237hist(x.sample, freq = F, breaks = 10)

The question here is, we don’t know the real parameter thus we can’t distinguish which subdistribution the data exactly. Then we assume \(Y_i \sim Bin(p)\), we have the unknown random variables.

For each sample:

\[ f(x_i, y_i; p, \mu_1, \mu_2, \sigma_1^2, \sigma_2^2) = [ p \frac{1}{\sqrt{2\pi} \sigma_1} e^{-\frac{(x_i-\mu_1)^2}{2\sigma_1^2}} ]^{y_i}[ (1-p) \frac{1}{\sqrt{2\pi} \sigma_2} e^{-\frac{(x_i-\mu_2)^2}{2\sigma_2^2}} ]^{1-y_i} \]

Then the likelihood function is,

\[ L(X, Y; p, \mu_1, \mu_2, \sigma_1^2, \sigma_2^2) = \prod_{i=1}^{n} [ p \frac{1}{\sqrt{2\pi} \sigma_1} e^{-\frac{(x_i-\mu_1)^2}{2\sigma_1^2}} ]^{y_i}[ (1-p) \frac{1}{\sqrt{2\pi} \sigma_2} e^{-\frac{(x_i-\mu_2)^2}{2\sigma_2^2}} ]^{1-y_i} \]

log-likelihood

\[ l(X, Y; p, \mu_1, \mu_2, \sigma_1^2, \sigma_2^2) = \log {L} = \sum_{i=1}^{n}{ {y_i}[ \log{p} -\frac{1}{2}{(\log{2\pi})}-\frac{1}{2} \log{2\sigma_1^2} -\frac{(x_i-\mu_1)^2}{2\sigma_1^2} ]+{(1-y_i)}[ \log{p} -\frac{1}{2}{(\log{2\pi})}-\frac{1}{2} \log{2\sigma_2^2} -\frac{(x_i-\mu_2)^2}{2\sigma_2^2} ]} \]

\[ E[Y_i] = \frac{p e^{-\frac{(x_i-\mu_1)^2}{2\sigma_1^2}}}{p e^{-\frac{(x_i-\mu_1)^2}{2\sigma_1^2}} + (1-p) e^{-\frac{(x_i-\mu_2)^2}{2\sigma_2^2}}} \]

Then let \(y_i = E[Y_i]\), we define

\[ \frac{\partial l}{\partial p} = \sum_{i=1}^{n} {\frac{y_i}{p} - \frac{1-y_i}{1-p}} = 0 \Rightarrow p = \frac{\sum y_i}{n} \]

\[ \frac{\partial l}{\partial \mu_1} = \sum_{i=1}^{n} {y_i \frac{(x_i-\mu_1)}{\sigma_1^2}} = 0 \Rightarrow \mu_1 = \frac{\sum y_ix_i}{\sum y_i} \]

\[ \frac{\partial l}{\partial \sigma_1} = \sum_{i=1}^{n} {y_i (-\frac{1}{2\sigma_1^2} +\frac{(x_i-\mu_1)}{2\sigma_1^4})} = 0 \Rightarrow \sigma_1^2 = \frac{\sum y_i(x_i-\mu_1)^2}{\sum y_i} \]

We can also have

iter.mu1 <- -1

iter.sigma1 <- 1

iter.mu2 <- 15

iter.sigma2 <- 1

iter.p <- 0.5

iter.y <- rep(1, n)

diff <- 1

target <- 1e-10

likelihood.old <- 0

while (diff>target){

g1 <- iter.p * dnorm(x.sample, iter.mu1, iter.sigma1)

g2 <- (1-iter.p) * dnorm(x.sample, iter.mu2, iter.sigma2)

iter.y <- g1/(g1+g2)

iter.p <- sum(iter.y)/n

iter.mu1 <- sum(iter.y * x.sample)/sum(iter.y)

iter.mu2 <- sum((1-iter.y) * x.sample)/sum(1-iter.y)

iter.sigma1 <- sqrt(sum(iter.y * (x.sample - iter.mu1)^2)/sum(iter.y)+ 1e-100)

iter.sigma2 <- sqrt(sum((1-iter.y) * (x.sample - iter.mu2)^2)/sum(1-iter.y)+ 1e-100)

likelihood.new <- sum(

log(iter.y+ 1e-100) + log(iter.p * dnorm(x.sample, iter.mu1, iter.sigma1)+ 1e-100)

)+sum(

log(1-iter.y+ 1e-100) + log((1-iter.p) * dnorm(x.sample, iter.mu2, iter.sigma2)+ 1e-100)

)

diff <- abs(likelihood.new - likelihood.old)

likelihood.old <- likelihood.new

}

# iter.mu1

# iter.sigma1

# iter.mu2

# iter.sigma2

# iter.p

data.frame(

Value = c("Real", "EM"),

p = c(real.p, iter.p),

mu1 = c(real.mu1, iter.mu1),

sigma1 = c(real.sigma1, iter.sigma1),

mu2 = c(real.mu2, iter.mu2),

sigma2 = c(real.sigma2, iter.sigma2)

) Value p mu1 sigma1 mu2 sigma2

1 Real 0.3 -10.000000 1.0000000 20.00000 1.0000000

2 EM 0.3 -9.835749 0.9753299 20.08518 0.9586382iter.mu1 <- -1

iter.sigma1 <- 1

iter.mu2 <- 1

iter.sigma2 <- 1

iter.p <- 0.5

iter.y <- rep(1, n)

diff <- 1

target <- 1e-10

likelihood.old <- 0

while (diff > target) {

# --- E step ---

g1 <- iter.p * dnorm(x.sample, iter.mu1, iter.sigma1)

g2 <- (1 - iter.p) * dnorm(x.sample, iter.mu2, iter.sigma2)

iter.y <- g1 / (g1 + g2)

# 修正:处理 iter.y 中的 NA (防止分母为 0)

iter.y[is.na(iter.y)] <- 0.5

# --- M step ---

iter.p <- sum(iter.y) / n

iter.mu1 <- sum(iter.y * x.sample) / sum(iter.y)

iter.mu2 <- sum((1 - iter.y) * x.sample) / sum(1 - iter.y)

# 更新 sigma 并防止其过小

iter.sigma1 <- sqrt(sum(iter.y * (x.sample - iter.mu1)^2) / sum(iter.y) + 1e-9)

iter.sigma2 <- sqrt(sum((1 - iter.y) * (x.sample - iter.mu2)^2) / sum(1 - iter.y) + 1e-9)

# --- 似然函数评估 ---

# 使用观测数据的对数似然:log(p*f1 + (1-p)*f2)

current_densities <- iter.p * dnorm(x.sample, iter.mu1, iter.sigma1) +

(1 - iter.p) * dnorm(x.sample, iter.mu2, iter.sigma2)

likelihood.new <- sum(log(current_densities + 1e-100))

# 检查是否产生了 NA

if(is.na(likelihood.new)) break

diff <- abs(likelihood.new - likelihood.old)

likelihood.old <- likelihood.new

}

data.frame(

Value = c("Real", "EM"),

p = c(real.p, iter.p),

mu1 = c(real.mu1, iter.mu1),

sigma1 = c(real.sigma1, iter.sigma1),

mu2 = c(real.mu2, iter.mu2),

sigma2 = c(real.sigma2, iter.sigma2)

) Value p mu1 sigma1 mu2 sigma2

1 Real 0.3 -10.000000 1.0000000 20.00000 1.0000000

2 EM 0.3 -9.835749 0.9753299 20.08518 0.9586382# 1. 准备数据

x <- x.sample

n <- length(x)

# 2. 初始化参数 (初值对 EM 收敛很重要)

pi_hat <- 0.5

mu1 <- -1; mu2 <- 1

sigma1_sq <- 1; sigma2_sq <- 1

# 迭代过程

for(iter in 1:100) {

# --- E-step ---

dens1 <- pi_hat * dnorm(x, mu1, sqrt(sigma1_sq))

dens2 <- (1 - pi_hat) * dnorm(x, mu2, sqrt(sigma2_sq))

gamma1 <- dens1 / (dens1 + dens2)

gamma2 <- 1 - gamma1

# --- M-step ---

n1 <- sum(gamma1)

n2 <- sum(gamma2)

pi_hat <- n1 / n

mu1 <- sum(gamma1 * x) / n1

mu2 <- sum(gamma2 * x) / n2

sigma1_sq <- sum(gamma1 * (x - mu1)^2) / n1

sigma2_sq <- sum(gamma2 * (x - mu2)^2) / n2

}

# 输出结果

cat("Final Parameters:\n pi =", pi_hat, "\n mu1 =", mu1, "mu2 =", mu2,

"\n var1 =", sigma1_sq, "var2 =", sigma2_sq)Final Parameters:

pi = 0.3

mu1 = -9.835749 mu2 = 20.08518

var1 = 0.9512684 var2 = 0.9189873data.frame(

Value = c("Real", "EM"),

p = c(real.p, pi_hat),

mu1 = c(real.mu1, mu1),

sigma1 = c(real.sigma1, sqrt(sigma1_sq)),

mu2 = c(real.mu2, mu2),

sigma2 = c(real.sigma2, sqrt(sigma2_sq))

) Value p mu1 sigma1 mu2 sigma2

1 Real 0.3 -10.000000 1.0000000 20.00000 1.0000000

2 EM 0.3 -9.835749 0.9753299 20.08518 0.9586382